TL;DR:

- Small deviations in assay accuracy can significantly impact patient safety and research reproducibility. Laboratory standards like ISO/IEC 17025 and traceability ensure measurement reliability, which is essential for regulatory compliance and data integrity. Implementing rigorous quality controls, proper materials, and fostering a culture of shared responsibility are crucial for minimizing errors in lab workflows.

A 1% deviation in assay accuracy is enough to alter dosage recommendations and, ultimately, affect patient safety. That single figure reframes what many laboratory professionals treat as an acceptable margin. In high-throughput research environments across the UK and Europe, where independent researchers and laboratory managers operate under increasing regulatory scrutiny and resource constraints, measurement accuracy is not a procedural nicety. It is the structural foundation on which reproducible, defensible research is built. This guide examines the real cost of inaccuracy, the standards that govern measurement reliability, the material properties that protect experimental integrity, and the practical strategies that reduce error at every stage of the research workflow.

Table of Contents

- Why small errors in the lab have big consequences

- The foundation: standards, traceability, and regulatory compliance

- Bacteriostatic water and reagent quality: getting the basics right

- Reducing human and process errors: practical lab strategies

- The uncomfortable truth: why accuracy is everyone’s responsibility

- Take accuracy further in your lab

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Tiny errors, big risks | Even a 1% measurement deviation can alter scientific results or impact patient safety. |
| Standards and compliance | ISO/IEC 17025 accreditation and traceability are vital for laboratory credibility and regulatory acceptance. |
| Reagent quality matters | Only high-quality, certified reagents like research-grade bacteriostatic water ensure consistent and safe outcomes. |
| Workflow vigilance | Auto-verification, standardized labeling, and routine training minimize errors and protect research integrity. |
| Accurate culture | Daily vigilance from every team member is more effective than relying solely on compliance checks. |

Why small errors in the lab have big consequences

Understanding laboratory accuracy requires a clear distinction between two related but separate concepts: accuracy and precision. Accuracy refers to how closely a measured value matches the true value of the quantity being measured. Precision, by contrast, refers to the reproducibility of measurements taken under identical conditions. High precision with low accuracy indicates a systematic calibration offset; high accuracy with low precision points to instability in the measurement system. Both parameters are required for results that can withstand scientific and regulatory scrutiny.
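The distinction can be made concrete with a small calculation. The sketch below is illustrative only: the function name and the replicate readings are hypothetical, and it uses relative bias as a stand-in for accuracy and the coefficient of variation (CV) as a stand-in for precision.

```python
from statistics import mean, stdev


def accuracy_and_precision(measurements, true_value):
    """Return (relative bias %, coefficient of variation %) for replicate readings."""
    avg = mean(measurements)
    # Accuracy: how far the average sits from the accepted true value.
    bias_pct = (avg - true_value) / true_value * 100
    # Precision: how tightly the replicates cluster around their own average.
    cv_pct = stdev(measurements) / avg * 100
    return bias_pct, cv_pct


# Hypothetical replicate readings of a 100.0 mg reference weight.
precise_but_offset = [102.0, 102.1, 101.9, 102.0, 102.0]
accurate_but_noisy = [97.0, 103.0, 99.0, 101.0, 100.0]

offset_bias, offset_cv = accuracy_and_precision(precise_but_offset, 100.0)
noisy_bias, noisy_cv = accuracy_and_precision(accurate_but_noisy, 100.0)

print(f"Offset balance: bias {offset_bias:.2f}%, CV {offset_cv:.2f}%")
print(f"Noisy balance:  bias {noisy_bias:.2f}%, CV {noisy_cv:.2f}%")
```

The first data set shows a bias of about 2% with a very small CV, the pattern associated with a calibration offset. The second shows essentially no bias but a CV above 2%, the pattern associated with an unstable measurement system.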

The consequences of confusing or neglecting either parameter are well documented. Accurate laboratory measurements ensure reproducibility of experiments, a cornerstone of the scientific method, and support reliable decision-making in pharmaceuticals where even a 1% deviation can impact dosage and patient safety. That is not a theoretical risk. In peptide reconstitution workflows, for example, a small deviation in water purity or reagent concentration can shift the effective molarity of a solution far enough to invalidate an entire assay series.
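As a rough illustration of how a small volumetric deviation propagates into concentration, the sketch below computes the molarity of a reconstituted solution. The peptide mass, molecular weight, and diluent volumes are hypothetical values chosen for easy arithmetic, not figures from any specific protocol.

```python
def molarity_mM(mass_mg, mw_g_per_mol, volume_ml):
    """Concentration in millimolar for a solute mass dissolved in a given volume."""
    # mg / (g/mol) gives mmol; mmol / mL gives mol/L; multiply by 1000 for mM.
    return mass_mg / mw_g_per_mol / volume_ml * 1000


def percent_change(actual, nominal):
    """Relative deviation of the actual value from the nominal value, in percent."""
    return (actual - nominal) / nominal * 100


# Hypothetical example: 5 mg of a 1000 g/mol peptide, intended diluent volume 2.00 mL.
nominal = molarity_mM(5.0, 1000.0, 2.00)
# The same vial when the diluent volume is over-delivered by 1% (2.02 mL).
actual = molarity_mM(5.0, 1000.0, 2.02)

print(f"Nominal: {nominal:.4f} mM, actual: {actual:.4f} mM")
print(f"Concentration shift: {percent_change(actual, nominal):.2f}%")
```

Under these assumptions a 1% excess of diluent lowers the concentration by just under 1%, and every downstream dilution inherits that shift.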

Audit data gives this problem concrete scale. A 10-year clinical biochemistry audit across 4.72 million samples identified 200 errors, an error rate of roughly 0.004%. Of those errors, 65% were post-analytical and typographical in nature, while 23.5% had direct clinical impact. The overall rate appears small, but because nearly a quarter of the identified errors affected clinical decisions, it still represents a meaningful number of affected outcomes.
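The audit figures can be turned into absolute counts with simple arithmetic. The sketch below uses only the numbers quoted above; the function and key names are illustrative.

```python
def audit_breakdown(total_samples, errors, post_analytical_share, clinical_share):
    """Convert the audit percentages quoted above into absolute counts."""
    return {
        "error_rate_pct": errors / total_samples * 100,
        "post_analytical_errors": round(errors * post_analytical_share),
        "clinically_significant_errors": round(errors * clinical_share),
    }


summary = audit_breakdown(
    total_samples=4_720_000,
    errors=200,
    post_analytical_share=0.65,
    clinical_share=0.235,
)

for name, value in summary.items():
    print(name, value)
```

On these figures, roughly 130 of the 200 errors were post-analytical and 47 had clinical impact.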

“Even a vanishingly low error rate, when applied at scale, produces a meaningful number of consequential events. This is why error prevention cannot be treated as a low-priority maintenance task.”

The practical consequences of laboratory inaccuracy fall across several categories:

- Dosing errors in pharmaceutical research, where reconstituted peptide concentrations deviate beyond acceptable tolerances

- Research irreproducibility, which wastes time, funding, and delays publication or regulatory submission

- Regulatory setbacks, particularly in EU and UK GMP-adjacent workflows where traceability failures invalidate entire study arms

- Supply chain rejection, when test results lack the calibration records required for cross-lab or partner acceptance

Implementing rigorous quality testing protocols and reviewing quality control tips for systematic error prevention are practical first responses to these documented risks.

Pro Tip: Schedule calibration checks for all critical instruments on a fixed interval rather than a reactive basis. Instruments can drift gradually enough that individual operators do not notice the shift, a phenomenon sometimes called “silent drift,” yet cumulative deviation still compromises data integrity across experiments.

The foundation: standards, traceability, and regulatory compliance

Having established that small errors carry outsized consequences, it is essential to understand the regulatory and metrological frameworks that exist to prevent them. Chief among these is ISO/IEC 17025, the internationally recognized standard for testing and calibration laboratory competence. In the UK and Europe, accreditation under this standard is administered by UKAS (United Kingdom Accreditation Service) and equivalent national bodies.

ISO/IEC 17025 accreditation ensures competence, impartiality, and consistent operation, making it a prerequisite for regulatory compliance, supply chain acceptance, and the production of traceable measurements. Laboratories that operate without this accreditation may produce internally consistent data, but that data lacks the formal traceability chain required for acceptance by external partners, regulatory agencies, or publication reviewers applying rigorous methodological standards.

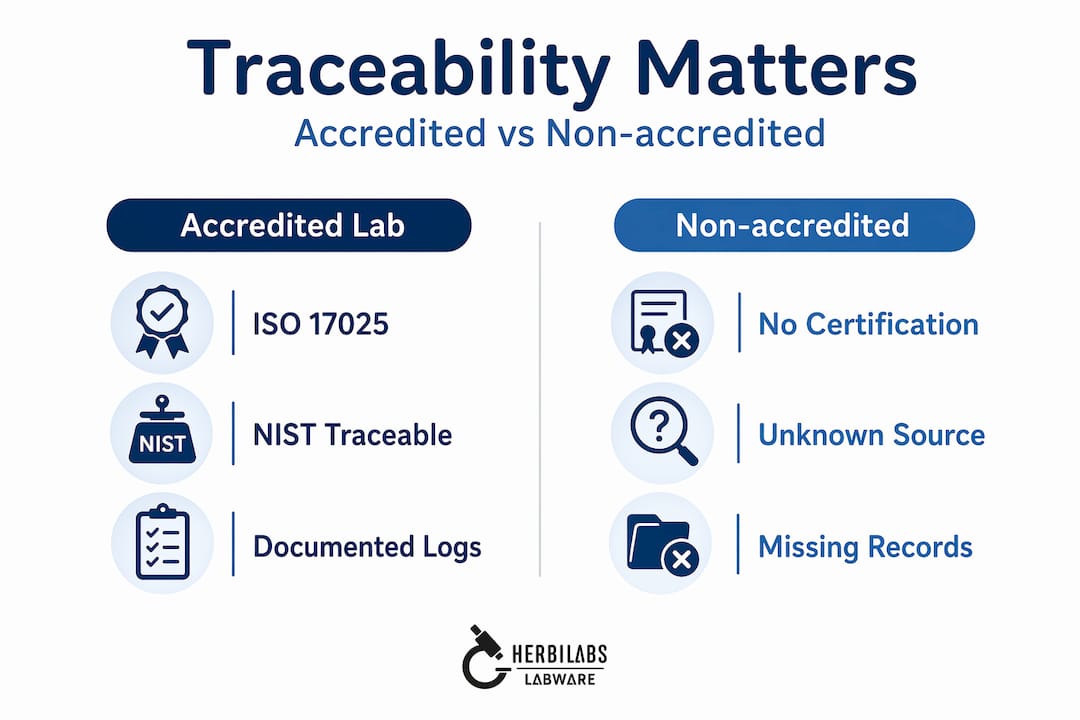

The practical differences between accredited and non-accredited laboratories are significant:

| Parameter | Accredited laboratory | Non-accredited laboratory |
|---|---|---|
| Calibration traceability | NIST/UKAS linked, documented | Variable, often unverifiable |
| Error rate accountability | Formal audit trail | Informal or absent |
| Regulatory acceptance | High (UK MHRA, EMA) | Low to conditional |
| Supply chain credibility | Recognized by partners | Requires additional validation |
| Cross-lab reproducibility | Supported by shared standards | Case-by-case negotiation |
| Quality system documentation | Mandatory, version-controlled | Optional |

Traceability is the backbone of this framework. In laboratory science, traceability refers to the unbroken chain of calibration that links a laboratory measurement back to a primary reference standard, typically maintained by national metrology institutes such as NIST (National Institute of Standards and Technology) in the United States, or the National Physical Laboratory (NPL) in the UK. Without that chain, a measurement result is isolated: it may appear correct, but it cannot be formally compared against results from other instruments, labs, or time points.

For independent researchers operating outside institutional laboratory infrastructure, ISO/IEC 17025 and its compliance requirements may seem remote. However, the principles they encode (documented calibration, defined uncertainty budgets, and traceable reference materials) apply at every scale. Consulting a quality control checklist and reviewing quality control in labs resources help operationalize these standards even without full institutional accreditation.

Pro Tip: Maintain calibration logs for every critical instrument, including pipettes, balances, pH meters, and spectrophotometers. These records are mandatory for formal audits, but they also serve a practical function: they allow you to detect instrument degradation trends before they affect experimental data.
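One way to use calibration logs for trend detection is to fit a straight line through the recorded offsets and estimate when the instrument would reach its tolerance limit. The sketch below is a minimal illustration. The log values and the 0.10 mg limit are hypothetical, and a plain least-squares slope is an assumed simplification that only handles upward drift.

```python
def drift_per_day(days, offsets):
    """Least-squares slope of calibration offset versus time (offset units per day)."""
    n = len(days)
    mean_x = sum(days) / n
    mean_y = sum(offsets) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, offsets))
    return sxy / sxx


def days_until_limit(days, offsets, limit):
    """Estimate days until the offset reaches `limit`, or None if there is no upward drift."""
    slope = drift_per_day(days, offsets)
    if slope <= 0:
        return None
    # Extrapolate from the most recent logged offset along the fitted slope.
    return (limit - offsets[-1]) / slope


# Hypothetical balance log: days since first check, offset from reference weight in mg.
log_days = [0, 30, 60, 90, 120]
log_offsets_mg = [0.00, 0.02, 0.04, 0.06, 0.08]

slope = drift_per_day(log_days, log_offsets_mg)
remaining = days_until_limit(log_days, log_offsets_mg, limit=0.10)
print(f"Drift: {slope:.5f} mg/day, about {remaining:.0f} days until the 0.10 mg limit")
```

A real instrument rarely drifts this linearly, so an estimate like this is best treated as a prompt to recalibrate early rather than as a prediction.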

Bacteriostatic water and reagent quality: getting the basics right

With standards and traceability established, the next layer of accuracy assurance concerns the physical materials used in experiments. Among these, bacteriostatic water (sterile water containing 0.9% benzyl alcohol as a preservative) is foundational in peptide reconstitution and multi-use injection workflows. Its properties directly affect the stability, sterility, and reproducibility of reconstituted reagents.

Research-grade bacteriostatic water with 0.9% benzyl alcohol, verified sterility, low endotoxin levels, and a lot-specific Certificate of Analysis (CoA) ensures consistent reconstitution of reagents, reducing contamination and variability in multi-use workflows for independent researchers. The CoA is not a formality. It is the documentary link between the product batch and its verified specification, equivalent in function to the traceability chain described in the standards section above.

The following table outlines the key specification differences between high-purity research-grade water and substandard alternatives:

| Specification | Research-grade water | Substandard water |
|---|---|---|
| Benzyl alcohol concentration | 0.9% (USP grade) | Variable or absent |
| Sterility | Validated, sterile-filtered | Not guaranteed |
| Endotoxin level | Less than 0.25 EU/mL | Uncontrolled |
| pH range | 4.5 to 7.0 | Unspecified |
| Lot-specific CoA | Provided with each batch | Absent or generic |
| Container integrity | Sealed vial with septum | Variable |

These differences are not cosmetic. Endotoxin contamination, for example, can activate immune responses in biological assays, skewing cytokine readouts and invalidating comparisons across experimental groups. An unverified pH range can destabilize lyophilized peptides during reconstitution, altering their bioactivity before any measurement is even taken.

Researchers can use the following checklist to assess water and reagent suitability before use:

- Verify the lot-specific CoA against the labeled product batch number, confirming benzyl alcohol concentration and endotoxin values.

- Check the sterility validation method stated on the CoA, and confirm the product was manufactured under appropriate cleanroom conditions.

- Inspect container integrity before opening, including the septum and vial seal, for any signs of compromise.

- Confirm the pH range is appropriate for the peptide or reagent being reconstituted, consulting the reagent’s technical data sheet.

- Review expiration dates and storage requirements, ensuring cold chain compliance where required.

- Record the lot number and expiration in your lab notebook or digital ELN before use, creating a traceable record of materials used in each experiment.
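The documentary parts of this checklist can be sketched as a simple automated check against the specification values in the table above. The `CoA` record, its field names, and the ±0.05 percentage-point tolerance on benzyl alcohol are assumptions made for illustration. Physical checks such as container inspection still require a person.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CoA:
    """Hypothetical record of values transcribed from a Certificate of Analysis."""
    lot_number: str
    benzyl_alcohol_pct: float
    endotoxin_eu_per_ml: float
    ph: float
    expiry: date


def coa_issues(coa, vial_lot, today, ba_tolerance=0.05):
    """Return a list of problems found; an empty list means no documentary issue."""
    issues = []
    if coa.lot_number != vial_lot:
        issues.append("CoA lot number does not match the vial label")
    # The tolerance around 0.9% is an assumed value, not a pharmacopoeial limit.
    if abs(coa.benzyl_alcohol_pct - 0.9) > ba_tolerance:
        issues.append("benzyl alcohol concentration outside tolerance")
    if coa.endotoxin_eu_per_ml >= 0.25:
        issues.append("endotoxin level not below 0.25 EU/mL")
    if not 4.5 <= coa.ph <= 7.0:
        issues.append("pH outside the 4.5 to 7.0 range")
    if coa.expiry < today:
        issues.append("product expired")
    return issues


good = CoA("BW-1001", 0.90, 0.10, 5.8, date(2026, 6, 30))
bad = CoA("BW-1002", 0.70, 0.40, 5.8, date(2024, 1, 31))

print(coa_issues(good, vial_lot="BW-1001", today=date(2025, 1, 15)))
print(coa_issues(bad, vial_lot="BW-1001", today=date(2025, 1, 15)))
```

Logging the returned list alongside the lot number in the lab notebook or ELN gives each experiment a record of what was checked and when.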

The guidance on water quality control tips provides additional detail on storage and handling, and the overview of high-purity reconstitution solutions outlines the product specifications to prioritize. Laboratories seeking infrastructure-level purity control may also benefit from reviewing leading water purification systems for in-house preparation.

Pro Tip: Never reuse diluents across experiments without first confirming sterility and expiration. Cross-contamination from repeated needle entry into a multi-use vial is more common than most researchers acknowledge, particularly in high-frequency reconstitution settings.

Reducing human and process errors: practical lab strategies

Armed with the right materials and a clear understanding of standards, the next critical step is minimizing human and process-related errors. Audit data frames the problem clearly. In a 10-year audit of clinical biochemistry samples, 65% of errors were post-analytical and typographical, meaning they occurred after the measurement itself, during transcription, reporting, or data entry. Yet 23.5% of all identified errors had clinical impact. Auto-verification implementation in the same audit improved verification coverage from 35% to 62%, directly reducing the error rate.

“Auto-verification software, when correctly configured, acts as a systematic barrier between raw instrument output and the reported result, catching the transcription and transposition errors that human reviewers consistently miss under high-throughput conditions.”

The lesson for independent laboratories and research units is direct: process-level controls reduce error rates more reliably than individual vigilance alone. The following strategies target the error types most commonly observed in audit data:

- Standardized labeling protocols: Use consistent label formats for all samples, reagents, and vials, including lot number, concentration, preparation date, and operator initials.

- Double-entry verification: For manually entered data, require independent re-entry by a second operator before the result is recorded as final.

- Auto-verification rules: Configure LIMS (Laboratory Information Management Systems) to flag results outside defined reference ranges for human review before release.

- Routine team training: Conduct structured refresher sessions on common error sources, not just initial onboarding, to maintain consistent awareness across staff.

- Peer review of unexpected results: Establish a clear protocol for what constitutes an anomalous result and require a second opinion before acting on it.

- Environmental controls: Ensure power protection in labs is maintained to prevent data loss or instrument malfunction during power fluctuations, which can introduce silent errors into automated systems.
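Two of the controls above, auto-verification rules and double-entry verification, lend themselves to a short sketch. The code below is a simplified illustration and does not reflect the interface of any particular LIMS. The function names, the reference range, and the tolerance are hypothetical.

```python
def auto_verify(result, low, high):
    """Release results inside the reference range; hold everything else for review."""
    return "release" if low <= result <= high else "review"


def entries_match(first_entry, second_entry, tolerance=0.0):
    """Double-entry check: two independent entries must agree within the tolerance."""
    return abs(first_entry - second_entry) <= tolerance


# Hypothetical reference range for an analyte, in arbitrary units.
LOW, HIGH = 3.5, 5.5

for value in (4.8, 7.2):
    print(value, auto_verify(value, LOW, HIGH))

# A transposition error (12.5 typed as 15.2) is caught by the second entry.
print(entries_match(12.5, 12.5))
print(entries_match(12.5, 15.2))
```

Neither check replaces human judgment. Both route attention to the results most likely to contain an error, in line with the audit finding that most errors arise after the measurement itself.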

Maintaining labware integrity through proper storage, handling, and inspection procedures is a parallel requirement. Even accurate measurements become meaningless if the labware introducing the sample to the instrument is compromised.

The uncomfortable truth: why accuracy is everyone’s responsibility

There is a persistent and damaging assumption within laboratory culture: that accuracy is primarily the responsibility of quality managers, accreditation auditors, or principal investigators. This assumption is incorrect, and it creates predictable blind spots.

External audits operate on a schedule. They inspect a sample of records, not every measurement, and they evaluate documented procedures rather than daily habits. A laboratory that performs well on a UKAS audit but lacks a daily culture of accuracy checking is not, in fact, a reliable laboratory. It is one that has learned to prepare for inspection. These two things are not the same.

Real accuracy depends on labware purity and standards at the supplier level, but it also depends on every individual in the workflow understanding their role. The junior researcher who transcribes a result, the technician who prepares a reconstitution, and the manager who signs off on a CoA review all carry accountability for the final output.

The most resilient laboratories we observe are not necessarily the most heavily audited. They are the ones where cross-training is standard, peer checking is normalized rather than seen as distrust, and unexpected results are treated as diagnostic signals rather than inconveniences. These cultural practices catch the silent errors that top-down quality mandates consistently miss.

Supplier transparency is equally critical. A reagent supplier that provides full lot-specific documentation, validated manufacturing conditions, and traceable purity data is not simply meeting a compliance threshold. They are actively participating in the researcher’s accuracy chain. Choosing suppliers who treat CoA documentation as a genuine quality artifact, rather than a routine checkbox, has a direct effect on experimental reproducibility.

The uncomfortable conclusion is simple: no quality program, however well designed, compensates for a laboratory culture in which accuracy is delegated upward and assumed to be someone else’s problem.

Take accuracy further in your lab

For researchers who recognize the gaps described in this guide, the next step is translating that recognition into practical action. At Herbilabs, we supply research-grade bacteriostatic water and reconstitution solutions manufactured to strict purity standards, with full lot-specific CoA documentation for every batch.

Our product range and educational resources are specifically designed to support independent researchers and laboratory managers in the UK and Europe who cannot afford variability in their diluents or reagents. Whether you are reviewing high-purity reagents to understand the quality markers that matter, working through guidance on selecting laboratory reagents for peptide research, or building your procurement standards using a reliable labware checklist, Herbilabs provides the resources and the products to back every accuracy commitment you make in the lab.

Frequently asked questions

What is the difference between accuracy and precision in the laboratory?

Accuracy measures how close a result is to the true value, while precision measures how reproducible results are across repeated measurements. As noted in accuracy vs. precision literature, high precision with low accuracy typically signals a calibration problem, while high accuracy with low precision points to system instability.

Why is ISO/IEC 17025 accreditation important for laboratories?

ISO/IEC 17025 accreditation demonstrates that a laboratory is technically competent, impartial, and produces measurements traceable to recognized standards, which is required for regulatory compliance and supply chain acceptance across the UK and EU.

How can researchers reduce errors in laboratory workflows?

Researchers can reduce errors through auto-verification systems, standardized labeling, double-entry data checks, and routine training. Audit data shows that auto-verification implementation improved verification coverage from 35% to 62% in a 10-year clinical biochemistry study.

What properties should researchers look for in bacteriostatic water?

Researchers should confirm 0.9% benzyl alcohol concentration, validated sterility, endotoxin levels below 0.25 EU/mL, and an appropriate pH range. A lot-specific CoA for every batch is the minimum acceptable documentation standard.

What are the most common types of laboratory errors?

The majority of laboratory errors are post-analytical and typographical in nature, occurring after measurement during transcription or reporting. However, 23.5% of identified errors carry direct clinical impact, which underscores why even rare errors require systematic controls.