TL;DR:

- Trace contaminants in laboratory water are a significant but often underestimated source of research failure, especially in trace-level analyses. Selecting the correct water grade based on ASTM standards is essential, as impurities like dissolved ions, organics, and particulates can interfere with sensitive assays, degrading data quality and reproducibility. Ensuring water purity compliance through rigorous monitoring and proper rinsing protocols is critical for maintaining reliable results and meeting UK and European accreditation standards.

Trace contaminants in laboratory water are one of the most underestimated sources of research failure, yet many scientists operating in well-equipped facilities still assume that any purified or deionized water is functionally interchangeable. This assumption is incorrect and, in analytical contexts such as peptide synthesis, HPLC, or LC-MS, it can lead to data that is irreproducible, invalid, or misleading. Understanding the distinctions between reagent water grades, their specific performance benchmarks, and the compliance requirements attached to their use is not optional for researchers working at trace-level sensitivity. This article covers ASTM water purity grades, contamination risks, regulatory obligations for UK and European laboratories, and validated practices for preventing water-based interference in sensitive assays.

Table of Contents

- How water purity directly influences research results

- The science of water grades: Matching purity to laboratory application

- Water quality and lab compliance: Meeting UK and European standards

- Final-rinse water and contamination control: Preventing hidden risks

- Why most labs underestimate water purity risks

- Find trusted high-purity water solutions for your research lab

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Water purity preserves data | Choosing the right water grade is critical for reproducible, trustworthy scientific results. |
| Compliance is mandatory for accredited labs | Accredited UK and European labs must test water quality and document the results as part of their quality system. |
| Final rinse prevents contamination | Even trace metals left by cleaning cycles can sabotage sensitive assays unless the final rinse uses ultrapure water. |
| Match grade to the task | ASTM Type I, II, and III waters each suit specific lab roles; always verify before use. |

How water purity directly influences research results

With that challenge in mind, let’s clarify exactly how water purity can make or break high-stakes experiments.

Laboratory water purity refers to the measured absence of dissolved ions, organic compounds, particulates, microbial content, and dissolved gases in water intended for analytical or research use. The most widely recognized classification system is ASTM International standard D1193, which defines four types of reagent water by limits on resistivity (or conductivity), total organic carbon (TOC), and specific ions such as sodium, chloride, and silica, with microbial limits handled through separate, optional grades. Types I, II, and III are the ones most laboratories use in practice. Each grade corresponds to a defined set of analytical applications, and selecting the correct grade is a fundamental step in method development and quality assurance.

The direct impact of water impurities on research outcomes is well established. Dissolved ions, such as sodium, chloride, and calcium, can interfere with ion-sensitive electrodes, affect buffer chemistry, and skew spectrophotometric baselines. Trace organics raise background signals in UV absorbance and fluorescence measurements. Particulates can block chromatography columns or alter cell culture conditions. In peptide research specifically, metal ion contamination can catalyze oxidation of sensitive residues such as methionine and cysteine, directly degrading the sample.

The reagent water grade must match the method’s sensitivity: water that is adequate for a routine buffer can contribute more background than the analyte itself in a trace-level assay. This principle holds across food safety testing, environmental analysis, and biochemical research, but it is particularly critical in peptide-focused laboratories, where analyte concentrations are often in the nanomolar range.

Key impurity categories and their research impacts:

- Dissolved ions: Alter ionic strength, promote adduct formation and ion suppression in mass spectrometry, and interfere with enzymatic assays

- Total organic carbon (TOC): Raises UV baseline in HPLC analysis, creating false peaks or suppressing signal resolution

- Particulates: Cause column plugging in LC systems and may introduce artifacts in microscopy

- Microbial contamination: Introduces endotoxins and DNase/RNase activity that can degrade biological samples

- Dissolved silica: A weakly ionized trace contaminant from feed water and glass surfaces that adds little to conductivity, so it can pass resistivity checks while still interfering with inductively coupled plasma mass spectrometry (ICP-MS)

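Statistic: Resistivity, expressed in megaohm-centimeters (MΩ·cm), is the most widely used indicator of ionic purity. Ultrapure water at its theoretical maximum purity reaches 18.2 MΩ·cm at 25°C, a conductivity of about 0.055 µS/cm that comes entirely from water’s own dissociation into H⁺ and OH⁻ ions. Water at 1.0 MΩ·cm has roughly 18 times that conductivity (1.0 µS/cm), and nearly all of the excess comes from dissolved contaminant ions, a difference that is decisive in trace-level work.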
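Because conductivity is simply the reciprocal of resistivity, the comparison above can be checked with a few lines of arithmetic. The sketch below is a minimal illustration, assuming readings are already temperature-compensated to 25°C; the function names are hypothetical and not taken from any meter’s software.

```python
# Minimal sketch: resistivity (MΩ·cm) and conductivity (µS/cm) are reciprocals.
# Assumes readings are already temperature-compensated to 25 °C.
THEORETICAL_PURE_WATER_MOHM_CM = 18.2  # theoretical maximum at 25 °C


def conductivity_us_per_cm(resistivity_mohm_cm: float) -> float:
    """Convert resistivity in MΩ·cm to conductivity in µS/cm."""
    if resistivity_mohm_cm <= 0:
        raise ValueError("resistivity must be positive")
    return 1.0 / resistivity_mohm_cm


def excess_conductivity(resistivity_mohm_cm: float) -> float:
    """Conductivity above that of theoretically pure water, in µS/cm.

    Pure water conducts only through its own H+ and OH- ions, so this
    excess is a rough proxy for dissolved ionic contaminants.
    """
    baseline = conductivity_us_per_cm(THEORETICAL_PURE_WATER_MOHM_CM)
    return max(0.0, conductivity_us_per_cm(resistivity_mohm_cm) - baseline)


pure = conductivity_us_per_cm(THEORETICAL_PURE_WATER_MOHM_CM)
for r in (18.2, 10.0, 1.0):
    c = conductivity_us_per_cm(r)
    print(f"{r:>5} MΩ·cm -> {c:.3f} µS/cm, {c / pure:.1f}x pure water, "
          f"excess {excess_conductivity(r):.3f} µS/cm")
```

The printed ratio for 1.0 MΩ·cm is 18.2, matching the statistic. Real meters apply their own temperature compensation, which this sketch does not attempt.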

Reviewing labware purity standards is an important starting point for teams building or auditing their water quality protocols, particularly when expanding into new analytical techniques. Similarly, building water quality checks into routine lab quality control ensures that purity monitoring becomes part of the standard operating environment rather than an afterthought.

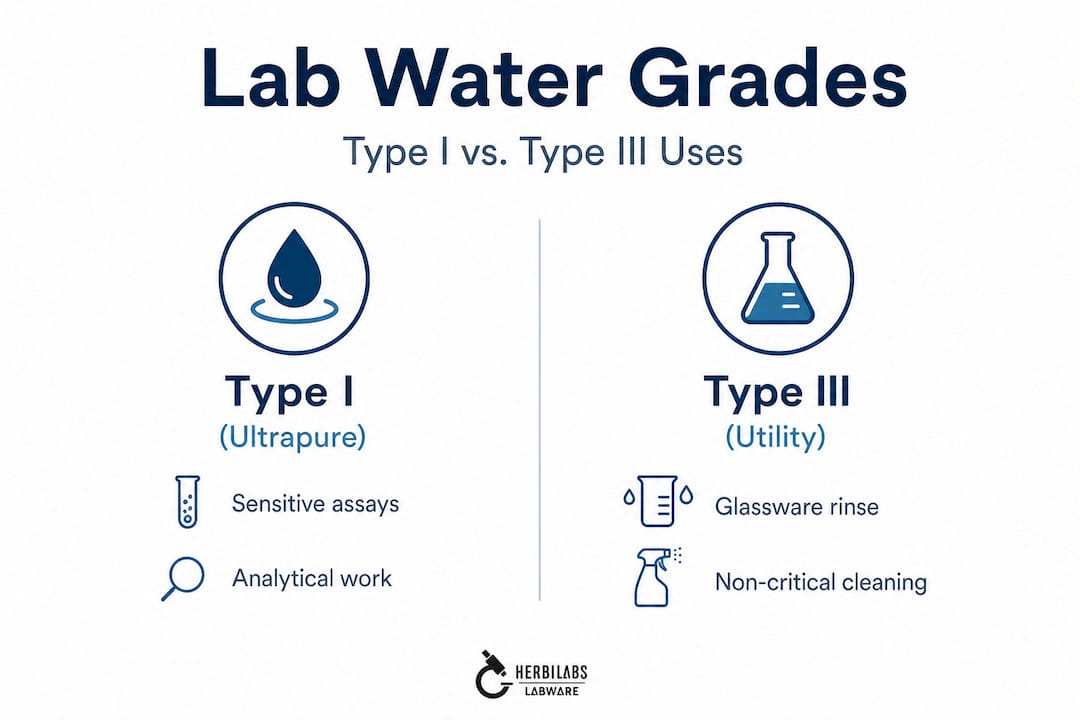

Pro Tip: Never use Type III water for analytical work. Reserve it strictly for non-critical tasks such as initial glassware rinsing or autoclave feeds. Introducing Type III water into an HPLC system or reconstitution workflow is one of the fastest ways to generate unreliable data.

The science of water grades: Matching purity to laboratory application

To apply these principles in your own lab, you first need to navigate the world of water grades.

ASTM D1193 is the definitive reference standard for reagent water classification in analytical laboratories. It defines four types of water, though Types I, II, and III are the most commonly applied in practice. ASTM standards are voluntary, but once a validated method or SOP cites D1193, its limits become the acceptance criteria for that method. That is what makes the standard a critical reference for method validation documentation.

ASTM D1193 water grade comparison (indicative values; confirm exact limits against the current edition of the standard, which handles microbial limits through separate supplementary grades):

| Parameter | Type I (ultrapure) | Type II (general lab) | Type III (utility) |
|---|---|---|---|
| Resistivity (MΩ·cm at 25°C) | ~18.2 | >1.0 | >0.05 |
| TOC (ppb) | <50 | <50 | Not specified |
| Microbial limit (CFU/mL) | <1 | <10 | <1000 |
| Recommended use | HPLC, LC-MS, ICP-MS, peptide synthesis | Buffer preparation, media, general reagents | Glassware rinsing, autoclave feed |
| Risk if misused | Method failure, false data | Ion interference in sensitive assays | Direct analyte contamination |

Lab Manager summarizes ASTM D1193 reagent-water grading by resistivity and TOC: Type I ultrapure water has ~18.2 MΩ·cm at 25°C and TOC below 50 ppb for trace-level techniques including HPLC, LC-MS, and ICP-MS; Type II is suited to general tasks with resistivity above 1.0 MΩ·cm; and Type III is appropriate only for non-analytical applications such as glassware rinsing. This graded approach lets laboratories control cost and risk together, reserving Type I water for the steps whose sensitivity demands it.
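As a rough illustration of grading by resistivity and TOC, the sketch below classifies a single reading against the indicative thresholds in the comparison table. It is a hypothetical helper, not an ASTM procedure: it treats the table’s limits as strict inequalities, assumes 18.0 MΩ·cm as the floor for the table’s “~18.2” Type I entry, and ignores microbial limits, which need a separate test.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class GradeSpec:
    name: str
    min_resistivity_mohm_cm: float   # reading must exceed this value
    max_toc_ppb: Optional[float]     # None means "not specified" in the table


# Indicative thresholds transcribed from the comparison table, purest first.
# 18.0 MΩ·cm is an assumed floor for the table's "~18.2" Type I entry.
GRADES = (
    GradeSpec("Type I", 18.0, 50.0),
    GradeSpec("Type II", 1.0, 50.0),
    GradeSpec("Type III", 0.05, None),
)


def highest_grade_met(resistivity_mohm_cm: float, toc_ppb: float) -> Optional[str]:
    """Return the purest grade whose resistivity and TOC limits are both met.

    Microbial limits are deliberately ignored; they need a separate culture test.
    """
    for spec in GRADES:
        if resistivity_mohm_cm <= spec.min_resistivity_mohm_cm:
            continue
        if spec.max_toc_ppb is not None and toc_ppb >= spec.max_toc_ppb:
            continue
        return spec.name
    return None  # fails even the utility-grade limits


print(highest_grade_met(18.2, 5.0))   # high resistivity, low TOC
print(highest_grade_met(18.2, 80.0))  # ionically pure but organically contaminated
```

The second call shows why resistivity alone is not a sufficient check: water at 18.2 MΩ·cm with elevated TOC falls through to the utility grade.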

Selecting the appropriate water grade for a given application involves more than consulting a single table. The process requires a structured assessment of method sensitivity, sample matrix, and downstream analytical instrument requirements. The following steps provide a practical framework:

- Define the method’s lowest required detection limit. Techniques operating below 1 ppb analyte concentration almost universally require Type I water in all sample preparation steps.

- Identify all contact points between water and the analytical pathway. This includes mobile phase preparation, standard dilutions, reconstitution of lyophilized peptides, and equipment wash cycles.

- Assess the instrument’s baseline sensitivity. Mass spectrometers and fluorescence detectors are far more sensitive to organic background than UV detectors at 280 nm, requiring correspondingly higher water purity.

- Review the reagent grade requirements in the validated method documentation. If a pharmacopoeial or published method specifies a water grade, that specification must be treated as a minimum, not a guideline.

- Document the water source, purification system, and monitoring frequency for each analytical step. This documentation is required for audit trails under ISO/IEC 17025 and is directly relevant to the accreditation assessments reviewed in the next section.
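The selection steps above can be expressed as a small rule set that flags any water contact point using a less pure grade than the method needs. This is a minimal sketch under stated assumptions: the 1 ppb threshold comes from the first step, but treating MS and fluorescence detectors as always requiring Type I, the `minimum_grade` and `audit_contact_points` names, and the example contact points are all hypothetical illustrations.

```python
from typing import Dict, List, Optional

# Lower rank = purer water.
RANK = {"Type I": 1, "Type II": 2, "Type III": 3}
HIGH_SENSITIVITY_DETECTORS = {"MS", "fluorescence"}  # illustrative set


def minimum_grade(lod_ppb: float, detector: str,
                  method_spec: Optional[str] = None) -> str:
    """Hypothetical rule set mirroring the selection steps."""
    if lod_ppb < 1.0 or detector in HIGH_SENSITIVITY_DETECTORS:
        required = "Type I"
    else:
        required = "Type II"
    # A grade named in the validated method is a floor, never a ceiling:
    # keep whichever of the two is purer.
    if method_spec is not None and RANK[method_spec] < RANK[required]:
        required = method_spec
    return required


def audit_contact_points(contact_points: Dict[str, str], required: str) -> List[str]:
    """Return contact points whose water grade is less pure than required."""
    return [point for point, grade in contact_points.items()
            if RANK[grade] > RANK[required]]


required = minimum_grade(lod_ppb=0.5, detector="MS")
points = {
    "mobile phase": "Type II",
    "standard dilution": "Type I",
    "peptide reconstitution": "Type I",
    "equipment wash": "Type III",
}
print(required, audit_contact_points(points, required))
```

Keeping the rules in one function makes it easy to record, for the audit trail, exactly which criterion set the required grade for each method.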

Understanding how reagent grades are defined, and how they relate to water selection, is essential when designing or revising analytical methods, particularly for peptide characterization workflows that involve multiple reagent inputs. For teams sourcing reconstitution media, reviewing high-purity reconstitution options provides a practical bridge between water quality theory and product selection.

Pro Tip: A common mistake in peptide workflows is preparing HPLC mobile phases with Type II water while using Type I for synthesis or sample preparation. If the mobile phase carries elevated ion or organic content, it will contaminate the column and the downstream analytical results. Use Type I water at every stage of the critical pathway.

Water quality and lab compliance: Meeting UK and European standards

Once you’re clear on the technical side, it’s essential to understand the accreditation and regulatory requirements, especially in the UK and Europe.

For accredited laboratories operating under ISO/IEC 17025, water purity is not an optional quality parameter. It is a documented, auditable component of the laboratory’s quality management system. The standard requires that laboratories identify all factors affecting measurement uncertainty, and since water quality directly influences analytical uncertainty, its monitoring must be formalized, recorded, and demonstrable during external audits.

In the UK, accreditation is overseen by UKAS (United Kingdom Accreditation Service). Its water microbiology update confirms that accreditation and compliance frameworks require laboratories to implement appropriate testing and monitoring methods for water quality and microbiology, making water purity part of a documented, auditable quality system. In practice this covers microbial monitoring, TOC tracking, and resistivity logging, each conducted at defined intervals and retained as objective evidence.

European laboratories operating under EN ISO/IEC 17025:2017 face equivalent requirements. Many also follow sector-specific guidance from bodies such as EURACHEM, whose technical guidance on measurement uncertainty and traceability treats reagent quality, including water, as a variable requiring control and documentation.

Practical steps to demonstrate water purity compliance during an audit:

- Maintain a water quality logbook that records daily or per-use resistivity and TOC readings, calibration dates for monitoring instruments, and any corrective actions taken when values fall outside acceptance criteria

- Document the purification system’s maintenance schedule, including filter and ion-exchange cartridge replacement, UV lamp change intervals, and reverse osmosis membrane cleaning or replacement

- Validate water purity at the point of use, not only at the purification unit outlet, because recontamination can occur in storage vessels or delivery tubing

- Define written acceptance criteria for each water grade in use, tied to specific methods or instruments in your standard operating procedures (SOPs)

- Retain water quality records for the period required by your accreditation body and contractual obligations; ISO/IEC 17025 requires laboratories to define record retention but does not fix a single period, so confirm the applicable minimum with your assessor

- Train all relevant personnel on water grade selection requirements and the documentation obligations attached to each analytical application
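A water quality logbook is easiest to audit when each reading is checked against written acceptance criteria as it is recorded. The sketch below assumes a hypothetical record layout and hypothetical SOP limits (`ACCEPTANCE`); the example readings are invented for illustration, including a point-of-use tap that reads lower than the unit outlet.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class WaterReading:
    taken_on: date
    location: str                # e.g. "unit outlet" or a point-of-use tap
    grade: str
    resistivity_mohm_cm: float
    toc_ppb: float
    meter_calibration_due: date


# Hypothetical SOP acceptance criteria; set these from your own validated methods.
ACCEPTANCE = {
    "Type I": {"min_resistivity": 18.0, "max_toc": 50.0},
    "Type II": {"min_resistivity": 1.0, "max_toc": 50.0},
}


def review_reading(r: WaterReading) -> List[str]:
    """List findings that would need a documented corrective action."""
    findings = []
    limits = ACCEPTANCE[r.grade]
    if r.resistivity_mohm_cm < limits["min_resistivity"]:
        findings.append(
            f"{r.location}: resistivity {r.resistivity_mohm_cm} MΩ·cm below limit")
    if r.toc_ppb > limits["max_toc"]:
        findings.append(f"{r.location}: TOC {r.toc_ppb} ppb above limit")
    if r.taken_on > r.meter_calibration_due:
        findings.append(f"{r.location}: meter calibration overdue")
    return findings


# Illustrative entries only, not real measurements.
log = [
    WaterReading(date(2024, 5, 1), "unit outlet", "Type I", 18.2, 4.0, date(2024, 6, 1)),
    WaterReading(date(2024, 5, 1), "HPLC bench tap", "Type I", 15.1, 12.0, date(2024, 6, 1)),
]
for reading in log:
    for finding in review_reading(reading):
        print(reading.taken_on, finding)
```

Recording the location with every reading is what lets an auditor see the difference between outlet and point-of-use quality.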

Consulting lab water quality tips provides additional practical detail on structuring monitoring programs, while the water purity consistency guide addresses the specific challenge of maintaining consistent purity over time in high-throughput research environments.

Final-rinse water and contamination control: Preventing hidden risks

Contamination risks don’t end with water selection. Glassware rinsing is a critical, often overlooked factor.

Even when analytical methods specify Type I water for sample preparation, laboratories frequently overlook the purity requirements for the final rinse stage of glassware cleaning. This is a significant oversight, because residual contaminants left on glassware surfaces by insufficient rinsing transfer directly into the next sample or reagent placed in that vessel. The effect is particularly pronounced in trace metal analysis, where nanogram-level carryover can be comparable to, or larger than, the analyte levels being measured.

Controlling final-rinse water quality in glassware cleaning is critical to prevent metal contamination carryover, and the requirement applies equally to peptide and biochemistry assays sensitive to low-level ionic or organic interference. Metal ions such as iron, zinc, copper, and lead adsorb readily onto glass surfaces and are not reliably removed by standard detergent wash cycles. Once acid or detergent has released these residues, a high-purity final rinse, typically Type I or equivalent, is needed to carry them away without depositing fresh ions from the rinse water itself.

Validated contamination control protocols for glassware rinsing typically include the following elements:

- Acid rinsing as a pre-wash step, using dilute nitric or hydrochloric acid to dissolve metal deposits before the main cleaning cycle, particularly relevant for glassware used in trace metal or peptide metal-binding studies

- Staged rinsing with progressively purer water, moving from tap water or Type III to Type II and finally Type I in the terminal rinse to minimize cross-contamination between rinse stages

- Post-rinse resistivity checks using a handheld or inline meter to confirm that the final rinse water meets specifications at the point of contact with the glassware

- Cycle documentation, recording water grade, rinse duration, and verification readings for each cleaning batch as part of the laboratory’s quality records

- Periodic recovery experiments, in which glassware is spiked with a known amount of target analyte, put through the full cleaning and rinse protocol, and then extracted with a blank rinse that is analyzed for residual analyte to validate the protocol’s effectiveness
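The staged-rinse and verification elements above lend themselves to a simple batch check, and the recovery experiment reduces to a carryover percentage. This is a hypothetical sketch: the `RinseBatch` layout, the 18.0 MΩ·cm default for the terminal rinse, and the example values are assumptions, and the acceptable carryover level must come from your own validated method.

```python
from dataclasses import dataclass
from typing import List

# Purity order used for staged rinsing (lower index = less pure).
STAGE_ORDER = ["tap", "Type III", "Type II", "Type I"]


@dataclass
class RinseBatch:
    batch_id: str
    stages: List[str]                # water grades in the order used
    final_rinse_resistivity: float   # MΩ·cm, measured at the point of contact


def check_batch(batch: RinseBatch, min_final_resistivity: float = 18.0) -> List[str]:
    """Return problems that should block release of a cleaning batch."""
    problems = []
    ranks = [STAGE_ORDER.index(stage) for stage in batch.stages]
    # Repeated stages are allowed; purity must never step backwards.
    if ranks != sorted(ranks):
        problems.append("rinse stages are not in order of increasing purity")
    if not batch.stages or batch.stages[-1] != "Type I":
        problems.append("terminal rinse is not Type I")
    if batch.final_rinse_resistivity < min_final_resistivity:
        problems.append("final rinse resistivity below specification")
    return problems


def carryover_percent(spiked_ng: float, residual_ng: float) -> float:
    """Share of a deliberately applied spike still recovered after cleaning."""
    if spiked_ng <= 0:
        raise ValueError("spike amount must be positive")
    return 100.0 * residual_ng / spiked_ng


batch = RinseBatch("B-001", ["tap", "Type II", "Type I"], 18.1)
print(batch.batch_id, check_batch(batch))
print(carryover_percent(spiked_ng=500.0, residual_ng=1.5))
```

Logging the output of `check_batch` alongside each batch ID gives the cycle documentation described above without a separate form.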

Reviewing labware rinse protocols provides detailed procedural guidance on implementing and validating rinse cycles for different vessel types and analytical applications.

Why most labs underestimate water purity risks

In our experience supporting research teams across the UK and Europe, one pattern emerges with remarkable consistency: the laboratories that encounter the most persistent reproducibility problems are rarely those with inadequate instrumentation or insufficient technical expertise. They are, far more often, facilities where water quality has been treated as a background assumption rather than a managed variable.

The troubleshooting process in most labs follows a predictable sequence. When results are inconsistent or out-of-specification, analysts first examine reagent preparation, column condition, detector calibration, and sample handling. Water quality is checked last, if at all, typically after several days of unproductive investigation. This sequencing is largely habit, but it has real cost implications in time, consumables, and delayed research output.

What makes water contamination particularly difficult to detect is that it rarely produces a dramatic, obvious failure. Instead, it degrades signal-to-noise ratio incrementally, shifts baseline absorbance slightly, or introduces low-level ionic interference that inflates measurement uncertainty without triggering an immediate out-of-specification flag. Researchers working with peptide purity challenges are especially vulnerable to this type of silent error because their analytes are often structurally sensitive to the exact ionic and oxidative conditions of the solvent environment.

The practical recommendation is straightforward: start every troubleshooting process with water. Verify resistivity, TOC, and microbial counts before examining any other variable. This approach may feel counterintuitive to researchers accustomed to treating water as a stable background constant, but in our experience it resolves ambiguous failures faster than any other single diagnostic step. Equally important is the recognition that “acceptable” water, meaning water that meets the minimum specification for a given grade, is not always sufficient for cutting-edge methods operating at the limits of instrument sensitivity. As analytical techniques advance and detection limits drop, the specifications that were adequate five years ago may no longer be fit for purpose.
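Because water degradation tends to be gradual, a simple trend check on logged readings can flag drift before a value crosses its limit. The sketch below fits an ordinary least-squares line to TOC readings and projects when the trend would reach a limit; it assumes roughly linear drift, which real systems (for example, after a cartridge change) will not always follow, and the function names and example values are hypothetical.

```python
from typing import Optional, Sequence


def slope_per_day(days: Sequence[float], values: Sequence[float]) -> float:
    """Ordinary least-squares slope of values against days."""
    n = len(days)
    if n < 2 or n != len(values):
        raise ValueError("need two or more paired readings")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("readings must span more than one day")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    return sxy / sxx


def days_until_limit(days: Sequence[float], values: Sequence[float],
                     limit: float) -> Optional[float]:
    """Project how many days after the last reading a rising trend hits `limit`.

    Returns None when the fitted trend is flat or falling, and 0.0 when the
    fitted line has already reached the limit.
    """
    slope = slope_per_day(days, values)
    if slope <= 0:
        return None
    intercept = sum(values) / len(values) - slope * sum(days) / len(days)
    crossing_day = (limit - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Illustrative weekly TOC readings (ppb), all still well inside a 50 ppb limit.
toc_days = [0, 7, 14, 21]
toc_ppb = [8.0, 10.0, 12.0, 14.0]
print(days_until_limit(toc_days, toc_ppb, limit=50.0))
```

For a reading that should stay above a limit, such as resistivity, the same idea applies with the sign of the trend reversed.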

Find trusted high-purity water solutions for your research lab

Managing water purity across analytical workflows requires more than technical knowledge. It requires access to verified, consistently pure products and reliable procedural guidance that translates standards into practice.

At Herbilabs, we supply research-grade bacteriostatic water, sterile diluents, and reconstitution solutions manufactured to strict purity standards and supported by rigorous quality control documentation. Whether you are reconstituting lyophilized peptides, preparing analytical standards, or validating a new method, our high-purity solutions are designed to meet the demands of trace-level research environments. For teams building or auditing their quality systems, our labware purity best practices resource offers structured guidance on grade selection and compliance documentation. Our water quality control tips provide actionable protocols to integrate purity monitoring directly into your standard operating procedures.

Frequently asked questions

What is ASTM Type I water and when should it be used?

ASTM Type I water is ultrapure with resistivity of approximately 18.2 MΩ·cm at 25°C and TOC below 50 ppb, making it the required standard for trace-level analyses including HPLC, LC-MS, and peptide synthesis where ionic or organic background must be minimized.

Does water purity affect glassware cleaning results?

Yes. Using insufficiently pure water in final rinses can leave trace metal ions and organic residues on glass surfaces that transfer directly into subsequent samples. Controlling final-rinse water quality is therefore a standard element of validated cleaning procedures in any laboratory conducting sensitive trace-level analysis.

How do UK and European labs demonstrate water purity compliance?

Accredited laboratories must document ongoing water quality testing and microbiology monitoring within their quality systems, as accreditation frameworks require these records to be auditable and retained according to ISO/IEC 17025 or equivalent national standards.

What’s the risk of using the wrong water grade for experiments?

Using the wrong grade introduces contaminants that can invalidate results or make experiments irreproducible. The failure comes from a mismatch between the water’s ionic and organic content and the method’s sensitivity requirements, and it is often difficult to detect without systematic water quality monitoring.