Quality control in laboratories is frequently mischaracterized as a routine administrative task, a series of occasional checks performed to satisfy regulatory auditors. In practice, QC is a structured system designed to detect and correct analytical deficiencies before results are released, encompassing documented processes, real-time statistical monitoring, corrective action protocols, and continuous verification of method performance. For researchers and laboratory managers across the UK and Europe, understanding QC at this level of depth is not optional. It directly determines research credibility, regulatory standing, and the integrity of every data point generated. This guide covers essential definitions, core methodologies, key standards, practical challenges, and actionable strategies for building a reliable quality culture.

Table of Contents

- Defining quality control in lab environments

- Foundational methodologies: Processes, controls, and frequency

- Key standards and benchmarks: ISO 17025, Eurachem, and sigma metrics

- Challenges and edge cases in lab quality control

- Best practices for building robust lab quality culture

- Perspective: Why hybrid and risk-based QC is the next frontier

- Advance your lab’s quality control with Herbilabs

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Comprehensive QC is essential | Rigorous quality control systems are vital for trustworthy lab results and regulatory compliance. |
| Use a blend of methods | Combining statistical controls, regular proficiency testing, and risk-based approaches offers reliable lab performance. |
| Stay updated with standards | Adhering to ISO 17025 and Eurachem guides ensures alignment with UK/EU best practices. |
| Audit and improve continuously | Ongoing reviews and hybrid QC models help identify issues early and enhance lab reliability. |

Defining quality control in lab environments

Now that we understand QC is more than ticking boxes, let’s clarify the key definitions and why they matter. At its foundation, QC detects, reduces, and corrects deficiencies in analytical processes before results are released to end users, whether those users are clinicians, regulators, or fellow researchers. Two concepts sit at the core of this definition: precision and accuracy. Precision is the closeness of agreement between repeated measurements of the same material: repeatability when conditions are identical, reproducibility when operators, instruments, or laboratories differ. Accuracy describes how closely a result matches the true or reference value; the related term trueness refers to how closely the average of many results matches that value.

For UK and EU researchers, QC is not simply good scientific practice. It is a formal obligation governed by frameworks including ISO 17025, UKAS accreditation requirements, and Eurachem guidance documents. Adherence to these frameworks determines whether a laboratory’s outputs are accepted by regulatory bodies, funding agencies, and peer-reviewed journals.

The consequences of inadequate QC extend well beyond failed audits. Consider the following failure scenarios:

- Unreliable analytical data leading to retracted publications and damaged institutional reputations

- Systematic bias in clinical measurements causing patient misdiagnosis or inappropriate treatment

- Reagent contamination undetected through insufficient controls, invalidating entire experimental series

- Non-compliant results triggering regulatory sanctions and suspension of laboratory accreditation

Selecting laboratory reagent standards that are traceable and well-characterized is one foundational step in preventing these outcomes. Similarly, sourcing certified lab supplies with documented purity profiles reduces the probability of matrix-related QC failures from the outset.

“Quality control is not a checkpoint at the end of a process. It is an integrated system of verification woven into every stage of analytical work, from reagent selection to result authorization.”

Foundational methodologies: Processes, controls, and frequency

With definitions set, let’s explore how labs actually implement quality control in practice. Key methodologies include running QC materials at shift starts, after instrument service, following reagent or calibration changes, and at defined batch intervals. This structured frequency ensures that any analytical drift or systematic error is detected before it propagates through a large volume of patient or research samples.
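As a rough illustration, the trigger events described above can be written as a simple check. This is a minimal sketch, not a prescribed implementation: the `RunState` fields, the `qc_due_reasons` function, and the default batch interval of 50 samples are all hypothetical and should be replaced with values from your own SOPs.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RunState:
    """Hypothetical snapshot of events since the last QC run."""
    shift_started: bool = False
    instrument_serviced: bool = False
    reagent_lot_changed: bool = False
    calibration_changed: bool = False
    samples_since_last_qc: int = 0


def qc_due_reasons(state: RunState, batch_interval: int = 50) -> List[str]:
    """Return every reason a QC run is currently due (empty list if none).

    batch_interval is an assumed placeholder, not a recommended value.
    """
    reasons = []
    if state.shift_started:
        reasons.append("shift start")
    if state.instrument_serviced:
        reasons.append("instrument service")
    if state.reagent_lot_changed:
        reasons.append("reagent lot change")
    if state.calibration_changed:
        reasons.append("calibration change")
    if state.samples_since_last_qc >= batch_interval:
        reasons.append("batch interval reached")
    return reasons


# Example: a new reagent lot was loaded mid-shift.
print(qc_due_reasons(RunState(reagent_lot_changed=True, samples_since_last_qc=12)))
```

Returning the list of reasons, rather than a bare yes or no, keeps a record of why each QC run was triggered, which is useful when the run is later reviewed.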

The standard implementation sequence typically follows these steps:

- Run QC materials at the start of each analytical shift to establish baseline performance

- Repeat QC after any instrument maintenance, recalibration, or reagent lot change

- Plot results on Levey-Jennings charts to visualize trends and shifts over time

- Apply Westgard rules to determine whether observed variation represents random error or a systematic problem

- Investigate and document any rule violation before releasing results
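The charting and rule-checking steps above can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions: it covers only a commonly cited subset of Westgard rules (1_3s, 2_2s, R_4s, 4_1s, 10_x), it applies them to consecutive results from a single control level, and the function names and example values are hypothetical. A production system would also handle multiple control levels within a run.

```python
from typing import List


def z_scores(values: List[float], target_mean: float, target_sd: float) -> List[float]:
    """Express each control result in SD units from the established mean,
    which is the scale a Levey-Jennings chart is plotted on."""
    return [(v - target_mean) / target_sd for v in values]


def westgard_violations(z: List[float]) -> List[str]:
    """Check the most recent points against a subset of Westgard rules."""
    violations = []
    if not z:
        return violations

    # 1_3s: one result beyond 3 SD (commonly associated with random error).
    if abs(z[-1]) > 3:
        violations.append("1_3s")

    if len(z) >= 2:
        a, b = z[-2], z[-1]
        # 2_2s: two consecutive results beyond 2 SD on the same side
        # (commonly associated with systematic error).
        if (a > 2 and b > 2) or (a < -2 and b < -2):
            violations.append("2_2s")
        # R_4s: one result above +2 SD and the other below -2 SD
        # (commonly associated with random error).
        if (a > 2 and b < -2) or (a < -2 and b > 2):
            violations.append("R_4s")

    # 4_1s: four consecutive results beyond 1 SD on the same side.
    if len(z) >= 4:
        last4 = z[-4:]
        if all(x > 1 for x in last4) or all(x < -1 for x in last4):
            violations.append("4_1s")

    # 10_x: ten consecutive results on the same side of the mean.
    if len(z) >= 10:
        last10 = z[-10:]
        if all(x > 0 for x in last10) or all(x < 0 for x in last10):
            violations.append("10_x")

    return violations


# Hypothetical control with an established mean of 100 and SD of 2.
results = [100.0, 101.0, 99.0, 104.5, 104.2]
print(westgard_violations(z_scores(results, target_mean=100.0, target_sd=2.0)))
```

In this example the last two results both sit more than 2 SD above the mean, so the sketch reports a 2_2s violation, the pattern usually investigated as a possible systematic shift.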

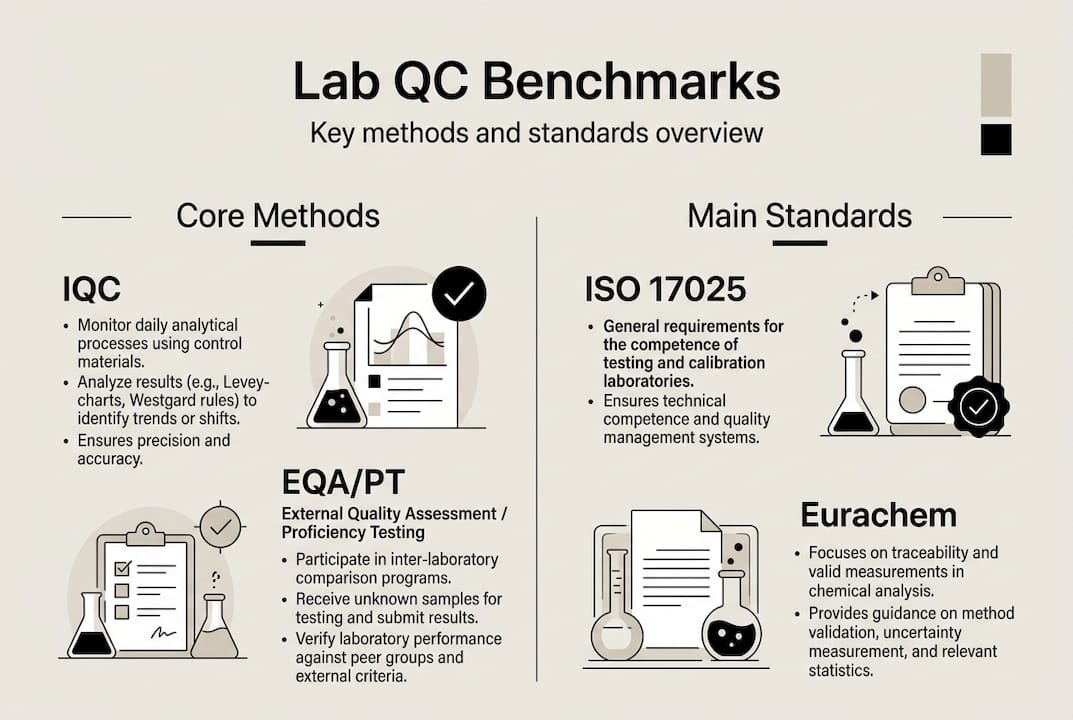

Internal QC (IQC) and external quality assessment (EQA) or proficiency testing (PT) serve complementary but distinct functions. Consulting lab water quality guidance and following reagent handling best practices further supports consistent IQC performance by minimizing pre-analytical variability.

| Parameter | IQC | EQA/PT |
|---|---|---|
| Purpose | Detect day-to-day analytical variation | Compare performance against peer laboratories |
| Frequency | Every shift or batch | Typically monthly to quarterly |
| Control material | In-house or commercial QC materials | Externally distributed samples |
| Primary value | Immediate error detection | Benchmarking and bias identification |

Pro Tip: Revalidate your control materials and acceptance limits whenever a method undergoes significant change, not only during routine scheduled reviews. Method modifications, even minor ones, can shift the analytical range in ways that existing controls no longer adequately monitor. QC guidance from manufacturing industries reinforces the same principle.

Key standards and benchmarks: ISO 17025, Eurachem, and sigma metrics

Now that you know the main tools and routines, let’s see how quality is formally benchmarked and regulated. ISO/IEC 17025 is the core standard for laboratory competence across the UK and Europe, requiring a documented quality management system, validated methods, metrological traceability, defined SOPs, and participation in proficiency testing schemes. UKAS accreditation against this standard is the recognized mechanism for demonstrating technical competence to regulators and commissioning bodies.

Eurachem’s method validation and fitness-for-purpose guides extend these requirements by providing practical frameworks for selecting validation parameters, setting acceptance criteria, and interpreting uncertainty data in context.

Sigma metrics offer a quantitative approach to benchmarking analytical performance. A sigma value is calculated using the formula: sigma = (TEa - |bias|) / CV, where TEa is the total allowable error, bias is the systematic error (taken as an absolute value), and CV is the coefficient of variation, all expressed as percentages at the same concentration level. Sigma metrics quantify lab performance on a universal scale: a value of 6 sigma or above is considered world-class, while values below 3 sigma indicate a process requiring immediate remediation.
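To make the arithmetic concrete, here is a minimal Python sketch of the calculation. The function names and the input figures (TEa of 6%, bias of 1%, and the replicate control values) are hypothetical examples chosen for illustration, not published targets for any analyte.

```python
from statistics import mean, stdev
from typing import List


def cv_percent(values: List[float]) -> float:
    """Coefficient of variation of replicate control results, in percent."""
    return stdev(values) / mean(values) * 100.0


def sigma_metric(tea_pct: float, bias_pct: float, cv_pct: float) -> float:
    """Sigma = (TEa - |bias|) / CV, with all inputs expressed in percent."""
    if cv_pct <= 0:
        raise ValueError("CV must be positive")
    return (tea_pct - abs(bias_pct)) / cv_pct


# Hypothetical replicate results for one control level.
replicates = [98.0, 100.0, 102.0]
cv = cv_percent(replicates)            # sample SD 2.0 on a mean of 100 gives 2.0%
sigma = sigma_metric(tea_pct=6.0, bias_pct=1.0, cv_pct=cv)
print(f"CV = {cv:.2f}%, sigma = {sigma:.2f}")
```

With these assumed inputs the method lands at 2.5 sigma, below the 3 sigma threshold mentioned above. Note that the bias is taken as an absolute value, so a negative bias lowers sigma just as a positive one does.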

Key performance benchmarks for UK and EU laboratories include:

- HbA1c: intra-laboratory CV target of less than 1.5%, with sigma values typically ranging from 4 to 6 in well-managed settings

- Sodium: sigma values frequently exceeding 6 in modern analyzers with appropriate calibration

- Troponin: highly method-dependent, often requiring risk-based QC design due to variable sigma performance

| Analyte | Typical sigma range | QC strategy implication |
|---|---|---|
| HbA1c | 4 to 6 | Multirule QC, frequent monitoring |
| Sodium | Greater than 6 | Simplified rules, less frequent QC |
| Troponin | 2 to 4 | Risk-based design, enhanced frequency |
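The table above can be read as a lookup from sigma value to QC approach. The sketch below encodes that reading in Python. The exact band boundaries are an assumption made for illustration (the table's ranges meet at 4, and it does not cover values below 2), and the function names are hypothetical.

```python
def qc_strategy(sigma: float) -> str:
    """Map a sigma value to the strategy wording used in the table.

    Boundaries are assumed: above 6, from 4 to 6 inclusive, and below 4.
    """
    if sigma > 6:
        return "Simplified rules, less frequent QC"
    if sigma >= 4:
        return "Multirule QC, frequent monitoring"
    return "Risk-based design, enhanced frequency"


def needs_remediation(sigma: float) -> bool:
    """Values below 3 sigma are treated as requiring immediate remediation."""
    return sigma < 3


for label, value in [("high", 6.5), ("mid", 5.0), ("low", 2.5)]:
    print(label, qc_strategy(value), needs_remediation(value))
```

Keeping the remediation flag separate from the strategy lookup reflects that a method between 3 and 4 sigma may call for a risk-based design without yet being in the remediation zone.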

Reviewing your certificate of analysis documentation and understanding labware purity importance are practical steps that directly support achieving and sustaining these sigma benchmarks. Insights from monitoring quality excellence in manufacturing contexts also translate effectively to laboratory settings.

Challenges and edge cases in lab quality control

While regulations provide structure, practical challenges often test even the best quality control systems. Matrix effects, lot-to-lot variation, and employee override risks are persistent edge cases that can compromise analytical integrity even in accredited laboratories. Matrix effects occur when the composition of a control material differs sufficiently from patient or research samples to produce divergent analytical responses, meaning a control can pass while actual samples yield biased results.

Five real-world scenarios where QC can fail despite apparent compliance:

- Non-commutable control materials that pass QC rules but do not reflect true sample behavior due to matrix differences

- Reagent lot drift, where a new lot performs within acceptance limits initially but shifts progressively over its shelf life

- Staff override of flagged results without documented investigation, allowing biased data to be released

- Infrequent corrective action audits, meaning systematic errors accumulate undetected between formal review cycles

- Calibration traceability gaps, particularly when reference materials are not certified to recognized metrological standards
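Reagent lot drift is a useful case to illustrate, because every individual control result can pass while the trend is clearly moving. One way to surface it, sketched below, is to fit a straight line through consecutive control results for a lot and compare the fitted shift with the control SD. This is an illustrative approach rather than a standard rule: the function names, the 1 SD threshold, and the example values are all assumptions.

```python
from typing import List


def drift_slope(values: List[float]) -> float:
    """Least-squares slope of control results against run index (units per run)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two results to fit a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    return sxy / sxx


def is_drifting(values: List[float], sd: float, max_shift_sd: float = 1.0) -> bool:
    """Flag a lot when the fitted trend moves more than max_shift_sd SDs
    across the window. max_shift_sd is an assumed, hypothetical threshold."""
    total_shift = drift_slope(values) * (len(values) - 1)
    return abs(total_shift) / sd > max_shift_sd


# Hypothetical lot: mean 100, SD 1.5. Every point is within 2 SD of the mean,
# yet the results climb steadily from run to run.
lot_results = [100.0, 100.5, 101.0, 101.5, 102.0]
print(drift_slope(lot_results), is_drifting(lot_results, sd=1.5))
```

A trend check like this complements, and does not replace, the run-by-run rules, since it looks at the direction of change over a window instead of the position of individual points.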

Furthermore, sigma metrics summarize average method performance and do not by themselves predict when a failure will occur, so risk-based models aligned with CLSI EP23 guidance may provide more meaningful patient safety assurance than statistical thresholds alone. Addressing contamination risks at the reagent preparation stage reduces one significant source of these edge-case failures.

Pro Tip: Schedule quarterly audits specifically targeting edge cases, including override logs, lot-change records, and matrix commutability assessments. These reviews frequently surface issues that routine statistical monitoring misses entirely.
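As one example of what an override-log review might look like in practice, the sketch below filters a log for overrides that have no documented investigation attached. The record layout (`result_id`, `overridden`, `investigation_ref`) is entirely hypothetical; real laboratory information systems will expose this information differently.

```python
from typing import Dict, List, Optional, Union

Record = Dict[str, Union[str, bool, Optional[str]]]


def undocumented_overrides(records: List[Record]) -> List[str]:
    """Return the IDs of overridden results that lack an investigation reference."""
    flagged = []
    for rec in records:
        ref = rec.get("investigation_ref")
        has_reference = isinstance(ref, str) and ref.strip() != ""
        if rec.get("overridden") and not has_reference:
            flagged.append(str(rec["result_id"]))
    return flagged


# Hypothetical log extract for one audit period.
override_log = [
    {"result_id": "R1", "overridden": True, "investigation_ref": "CA-014"},
    {"result_id": "R2", "overridden": True, "investigation_ref": None},
    {"result_id": "R3", "overridden": False, "investigation_ref": None},
    {"result_id": "R4", "overridden": True, "investigation_ref": "  "},
]
print(undocumented_overrides(override_log))
```

Treating a blank or whitespace-only reference the same as a missing one is a deliberate choice here, since an empty field offers no more evidence of investigation than no field at all.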

Best practices for building robust lab quality culture

Given these challenges, here’s how lab managers can set up a robust system for long-term quality gains. Prioritizing robust SOPs, continual training, audits, and blending statistical with risk-based QC produces the most resilient outcomes for laboratories operating under ISO 17025 and similar frameworks. Quality culture cannot be mandated through documentation alone. It requires active reinforcement through daily routines, leadership modeling, and structured feedback mechanisms.

Five steps for building a high-reliability QC culture:

- Embed QC into daily workflows by making control runs, result review, and Levey-Jennings chart updates non-negotiable start-of-shift activities

- Develop SOPs that explicitly address edge cases, including procedures for reagent lot transitions, matrix commutability assessments, and staff override protocols

- Conduct regular training cycles that go beyond initial onboarding, incorporating case studies of real QC failures and their root causes

- Integrate risk-based QC design alongside statistical tools, using error severity and patient impact assessments to calibrate control frequency and rule selection

- Establish a feedback loop where audit findings, corrective actions, and near-miss events are systematically reviewed and used to update SOPs

Referencing your aseptic technique checklist during reagent preparation is one practical example of embedding QC principles into pre-analytical workflows, reducing the probability of contamination-related failures before analysis even begins.

“The laboratories that consistently achieve top-tier performance are not those with the most sophisticated instruments. They are those where quality is treated as a shared professional responsibility, not a compliance exercise.”

Perspective: Why hybrid and risk-based QC is the next frontier

Conventional wisdom in laboratory QC has long centered on statistical process control: run controls, apply Westgard rules, and release results when criteria are met. This approach is necessary but increasingly insufficient. Relying on statistical QC alone creates blind spots, particularly for analytes with variable matrix behavior or for errors that fall below detection thresholds of standard rule sets.

Hybrid models, which combine Levey-Jennings statistical monitoring with risk-severity assessments and traceability verification, address these gaps more effectively. UK and EU laboratories are well-positioned to adopt patient-risk and error-severity frameworks derived from EP23 and IFCC critiques of sigma-only approaches. The uncomfortable truth is that regulatory compliance does not equal true analytical reliability. A laboratory can satisfy every ISO 17025 requirement and still release results with clinically meaningful bias if its QC design does not account for real-world error modes.

Practical wisdom: use actual error data from incident logs and corrective action records, not just checklist completion rates, to drive QC system improvements. Reviewing reagent standards and QC documentation as part of this process ensures that upstream material quality is factored into the risk model.

Advance your lab’s quality control with Herbilabs

Equipped with actionable insights, here’s how your lab can access specialized resources and maintain world-class QC. Herbilabs supplies research-grade reagents and reconstitution solutions manufactured to strict purity standards, providing the traceable, contaminant-free materials that robust QC systems depend on.

Exploring our guidance on high-purity reagents gives laboratory managers a clear framework for evaluating how material quality upstream affects QC performance downstream. For teams managing reconstitution workflows, our resource on research water stability addresses a frequently overlooked variable in analytical reproducibility. Our lab consumables comparison guide supports informed procurement decisions aligned with ISO 17025 and Eurachem requirements, helping labs maintain both compliance and analytical confidence.

Frequently asked questions

What is the main goal of quality control in a laboratory?

QC detects and corrects deficiencies in analytical processes before results are released, ensuring outputs are both accurate and precise. Without this structured system, errors can propagate undetected through entire data sets.

How often should QC checks be performed in a lab?

QC checks are run at shift start, after instrument service, and following any reagent or calibration change to maintain continuous analytical oversight. The frequency should also reflect the criticality and risk profile of each analytical method.

What are common challenges in lab quality control?

Matrix effects, lot-to-lot variation, and override risks are among the most persistent threats to QC integrity, requiring proactive auditing and risk-based management strategies beyond routine statistical monitoring.

Why are sigma metrics important in laboratory quality control?

Sigma metrics quantify lab performance by integrating allowable error, bias, and imprecision into a single benchmark, enabling objective comparison of method performance and informing QC rule selection.

How do UK/EU labs achieve ISO 17025 accreditation?

ISO/IEC 17025 requires a QMS, validated methods, metrological traceability, documented SOPs, and participation in proficiency testing schemes to demonstrate technical competence to accreditation bodies such as UKAS.