TL;DR:

- More than 70% of researchers report failing to replicate another scientist’s results, which wastes resources and erodes trust.

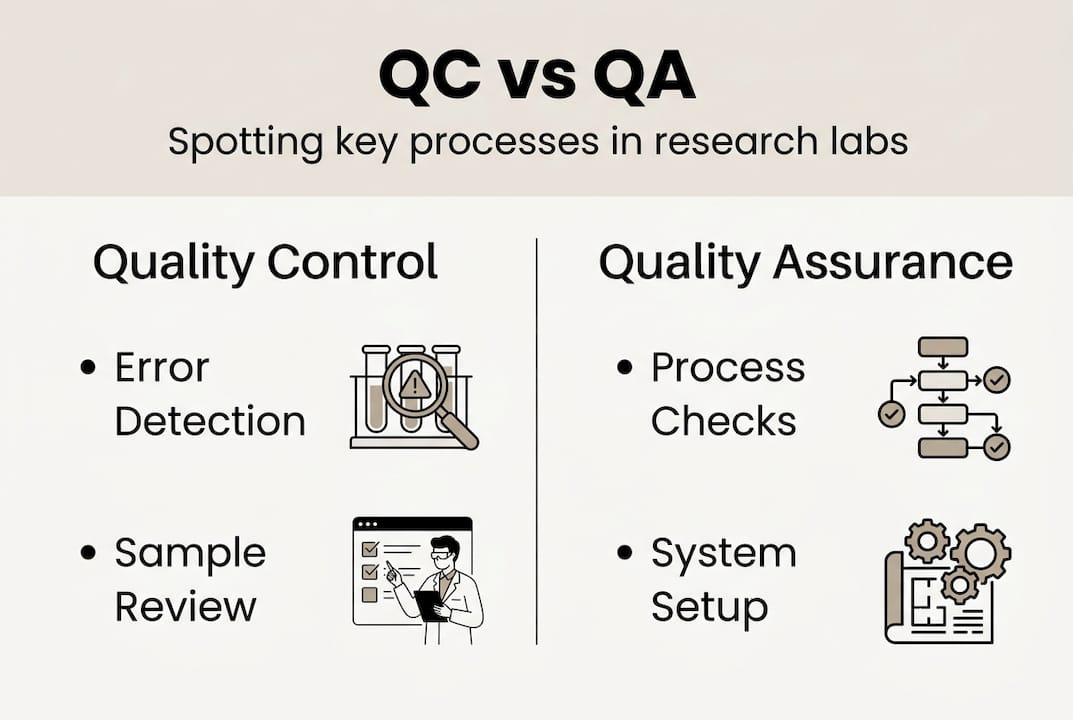

- Quality control detects errors in outputs, while quality assurance builds reliable processes.

- Implementing integrated QA/QC systems with ongoing monitoring improves research reproducibility.

Over 70% of researchers have failed to replicate another scientist’s results, and more than half acknowledge that a reproducibility crisis exists in modern science. That figure is not an abstract concern; it represents wasted resources, retracted publications, and eroded institutional trust. Quality control sits at the center of this problem and, more importantly, its solution. This article covers the core definitions, practical frameworks, and daily techniques that independent researchers and laboratory managers need to build reliable, reproducible workflows. It starts with the difference between QC and QA and ends with validated monitoring tools that catch errors before they compromise your data.

Table of Contents

- What is quality control and why does it matter in research?

- Core elements of quality control in research workflows

- Essential QC techniques: From method validation to real-time monitoring

- Common challenges and practical solutions in real-world research settings

- The uncomfortable truth: Why most labs underestimate the power of integrated QA/QC

- Enhance your lab’s quality control with proven solutions

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| QC underpins research trust | Quality control is vital for reliable and reproducible research results. |
| Systems ensure consistency | Implement frameworks like LQMS and ISO 15189 for sustainable laboratory quality. |
| Tools and documentation matter | Use validated methods, regular monitoring, and thorough record-keeping in your QC process. |
| Address challenges proactively | Anticipate reagent changes and integrate continual improvement to keep errors at bay. |
| Integrated QA/QC is key | Sustainable quality requires a blend of assurance, control, and ongoing improvement. |

What is quality control and why does it matter in research?

Quality control and quality assurance are terms that are frequently used interchangeably, yet they describe fundamentally different functions within a research environment. Understanding the distinction is the first step toward building a system that actually works.

Quality assurance (QA) is process-oriented and preventative, while quality control (QC) is product-oriented and detective. QA establishes the conditions under which reliable results can be produced, covering standard operating procedures (SOPs), staff training, and equipment maintenance schedules. QC, by contrast, evaluates the outputs of those processes, checking whether a specific measurement, batch, or result meets predefined acceptance criteria.

| Feature | Quality assurance (QA) | Quality control (QC) |
|---|---|---|
| Orientation | Process | Product/output |
| Function | Preventative | Detective |
| Focus | System design | Error detection |
| Examples | SOPs, training programs | Control charts, retesting |
| Timing | Before and during work | During and after work |

The practical importance of QC for data reproducibility becomes clear when you examine the scale of the problem. Replication studies consistently find that effect sizes in replicated experiments are approximately 60% smaller than in original studies, and fewer than 50% of published findings hold up under independent scrutiny. These numbers reflect what happens when QC is treated as optional rather than structural.

“The reproducibility crisis is not a failure of individual scientists. It is a failure of systems that lack rigorous, integrated quality control at every stage of the research process.”

Poor QC carries consequences that extend well beyond a single failed experiment. The most significant include:

- Irreproducibility: Results that cannot be replicated undermine the scientific record and waste downstream research investment.

- Resource loss: Reagents, equipment time, and personnel hours spent on flawed experiments represent direct financial costs.

- Loss of credibility: Repeated QC failures damage a laboratory’s reputation with funding bodies, journals, and institutional partners.

- Regulatory risk: In regulated research environments, QC failures can trigger audits, sanctions, or loss of accreditation.

For a thorough grounding in quality control in labs, it helps to approach QC not as a checklist but as an ongoing analytical discipline. Reviewing quality control tips designed for active research settings can also accelerate practical implementation.

Core elements of quality control in research workflows

Now that you understand why QC matters, it is time to explore the essential systems and standards that build a solid foundation for any research lab, whether you manage a multi-person institutional facility or conduct independent research.

A Laboratory Quality Management System (LQMS) provides the structural backbone for all QC activities. An LQMS centers on 12 Quality System Essentials aligned with ISO 15189 standards, covering everything from document control to customer focus and process improvement.

| Quality system essential | Core function |
|---|---|
| Documents and records | Version control, traceability |
| Organization | Roles, responsibilities, accountability |
| Personnel | Training, competency assessment |
| Equipment | Calibration, maintenance logs |
| Purchasing and inventory | Supplier qualification, reagent tracking |
| Process control | SOPs, method validation |
| Information management | Data integrity, secure storage |
| Occurrence management | Incident reporting, CAPA |
| Assessment | Internal audits, external reviews |
| Process improvement | Continual review cycles |
| Customer focus | Stakeholder feedback integration |
| Facilities and safety | Environmental monitoring |

ISO 15189, the international standard for quality and competence in medical laboratories, covers both internal QC and external quality assessment, with the frequency of each activity determined by method stability and risk level. For independent researchers, full ISO 15189 accreditation may not be feasible, but adopting its principles selectively provides a measurable improvement in data reliability.

To establish QC in a new or solo research setup, follow these steps in order:

1. Define your critical measurements and identify which analytical steps carry the highest error risk.

2. Draft SOPs for each critical process, including reagent preparation, instrument operation, and data recording.

3. Select appropriate control materials that match the matrix and concentration range of your samples.

4. Establish baseline performance by running controls repeatedly to calculate mean and standard deviation values.

5. Implement a Levey-Jennings chart to visualize control data over time and detect drift or shifts early.

6. Schedule regular review intervals to evaluate QC data and update procedures when performance trends change.
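
The baseline and charting steps above can be sketched in a few lines of Python. This is a minimal illustration, not a validated QC tool: the function names (`levey_jennings_limits`, `z_score`) and the example control values are assumptions made for this sketch, and the standard deviation is the sample SD computed from whatever baseline runs you supply.

```python
from statistics import mean, stdev


def levey_jennings_limits(baseline):
    """Return the center line, SD, and +/-1s, 2s, 3s limits for one control level."""
    if len(baseline) < 2:
        raise ValueError("need at least two baseline values to estimate the SD")
    center = mean(baseline)
    sd = stdev(baseline)  # sample standard deviation (n - 1 denominator)
    if sd == 0:
        raise ValueError("baseline values are identical; SD is zero")
    limits = {"mean": center, "sd": sd}
    for k in (1, 2, 3):
        limits[f"+{k}s"] = center + k * sd
        limits[f"-{k}s"] = center - k * sd
    return limits


def z_score(value, limits):
    """Express a new control result as a number of SDs from the baseline mean."""
    return (value - limits["mean"]) / limits["sd"]


# Hypothetical baseline runs for a single control level (arbitrary units).
baseline_runs = [98, 100, 102]
limits = levey_jennings_limits(baseline_runs)
print(limits["-2s"], limits["+2s"])  # warning limits for the chart
print(z_score(106, limits))          # position of a new control result
```

Plotting each new control result as a z-score against these limits gives the Levey-Jennings chart. In practice the baseline should contain far more than the three values used here for readability.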

Pro Tip: Start with simple controls such as SOPs and Levey-Jennings charts before adding advanced statistical frameworks. Complexity added before baseline performance is understood tends to obscure problems rather than reveal them.

Maintaining lab water quality standards is one area where QC implementation pays immediate dividends, since water purity directly affects reagent performance. Equally, structured lab consumables management reduces the variability introduced by inconsistent or expired materials.

Essential QC techniques: From method validation to real-time monitoring

With frameworks in place, the focus shifts to the hands-on techniques that provide actionable QC feedback every day, from sample preparation through to data analysis.

Method validation is the process of confirming that an analytical method consistently measures what it is intended to measure, within defined performance limits. Method validation ensures analytical reliability and is especially critical after any process change, such as a reagent lot substitution, instrument recalibration, or protocol revision. Key parameters evaluated during validation include accuracy, precision, linearity, limit of detection, and specificity.
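
Two of these parameters, accuracy and precision, reduce to simple arithmetic on replicate measurements of a reference material. The sketch below shows one common way to express them, as percent bias and percent coefficient of variation. The function names and the acceptance thresholds in `meets_criteria` are hypothetical; real limits should come from your own validation plan.

```python
from statistics import mean, stdev


def percent_bias(measurements, reference_value):
    """Accuracy: deviation of the mean result from the reference value, in percent."""
    return (mean(measurements) - reference_value) / reference_value * 100.0


def percent_cv(measurements):
    """Precision: sample standard deviation relative to the mean, in percent."""
    return stdev(measurements) / mean(measurements) * 100.0


def meets_criteria(measurements, reference_value, max_bias_pct, max_cv_pct):
    """Check replicates against acceptance limits (limits are assumptions of this sketch)."""
    return (abs(percent_bias(measurements, reference_value)) <= max_bias_pct
            and percent_cv(measurements) <= max_cv_pct)


# Hypothetical replicates of a reference material with an assigned value of 10.0.
replicates = [9.0, 10.0, 11.0]
print(percent_bias(replicates, 10.0))
print(percent_cv(replicates))
print(meets_criteria(replicates, 10.0, max_bias_pct=5.0, max_cv_pct=15.0))
```

Re-running the same calculation after a reagent lot substitution or recalibration gives a direct before-and-after comparison.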

The most commonly used daily QC checkpoints in a research laboratory include:

- Control materials: Samples with known values run alongside unknowns to verify instrument and method performance.

- Levey-Jennings charts: Graphical plots of control values over time, with mean and warning/rejection limits marked at defined standard deviation intervals.

- Westgard rules: A set of statistical decision rules applied to Levey-Jennings data to determine whether a run should be accepted or rejected.

- Duplicate testing: Running the same sample twice to assess within-run precision.

- Calibration verification: Confirming that instrument calibration remains valid using traceable reference materials.

Levey-Jennings charts and Westgard rules are the most widely adopted tools for detecting both systematic and random errors in laboratory measurements. The 1:3s rule triggers rejection when a single control value falls more than three standard deviations from the mean; it responds mainly to random error, although a large systematic shift can also trip it. The R:4s rule flags random error when two control measurements in the same run differ by more than four standard deviations, typically one above +2s and the other below -2s.
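
The two rules described above can be expressed as simple checks on z-scores. The following is a minimal sketch, assuming each control in a run is supplied together with its own established mean and SD. The function names are illustrative, and the R:4s check uses the "difference greater than four SD" reading given in the text.

```python
def rule_1_3s(z_scores):
    """1:3s: at least one control lies more than 3 SD from its mean."""
    return any(abs(z) > 3 for z in z_scores)


def rule_r_4s(z_scores):
    """R:4s: the spread between controls in the same run exceeds 4 SD."""
    return len(z_scores) >= 2 and (max(z_scores) - min(z_scores)) > 4


def evaluate_run(controls):
    """Evaluate one run.

    controls: list of (value, mean, sd) tuples, one per control level.
    Returns a dict with the accept/reject decision and the rules violated.
    """
    z_scores = []
    for value, mean, sd in controls:
        if sd <= 0:
            raise ValueError("sd must be positive")
        z_scores.append((value - mean) / sd)
    violated = []
    if rule_1_3s(z_scores):
        violated.append("1:3s")
    if rule_r_4s(z_scores):
        violated.append("R:4s")
    return {"accept": not violated, "violated": violated}


# Hypothetical two-level run: the low control sits high, the high control sits low.
run = [(104.5, 100, 2), (47.5, 50, 1)]
print(evaluate_run(run))
```

Only two rules are covered here. A full multirule procedure adds further checks, and the set you apply should follow your own QC plan.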

Consider a practical scenario: you are running an immunoassay and notice that your high-concentration control has trended upward over five consecutive runs without exceeding the 2s warning limit. A Levey-Jennings chart makes this drift immediately visible; without it, the trend would likely go unnoticed until a rejection event occurs. Early detection at the trend stage is far less disruptive than corrective action after a failed run.
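
A trend like the one in this scenario can also be flagged programmatically. The sketch below checks whether the most recent control results move steadily in one direction. The function name `has_trend` and the default window of five results mirror the scenario above and are assumptions of this example, not a formal rule.

```python
def has_trend(values, run_length=5):
    """Return True if the last `run_length` results are strictly rising or strictly falling."""
    if run_length < 2:
        raise ValueError("run_length must be at least 2")
    if len(values) < run_length:
        return False
    recent = values[-run_length:]
    steps = [later - earlier for earlier, later in zip(recent, recent[1:])]
    return all(s > 0 for s in steps) or all(s < 0 for s in steps)


# Hypothetical high-control results from five consecutive runs, all within 2s.
high_control = [100.1, 100.4, 100.9, 101.5, 102.2]
if has_trend(high_control):
    print("Upward or downward trend detected: review before a rejection occurs")
```

A check like this complements the chart rather than replacing it, since it says nothing about how far the values sit from the mean.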

Pro Tip: Document every deviation from expected control performance, not just out-of-range results. Borderline values and near-misses often precede systematic failures and provide the earliest warning that a process is drifting.

Applying aseptic techniques during reagent preparation and following reagent handling best practices are foundational to ensuring that QC results reflect true analytical performance rather than contamination artifacts. Labware purity is equally relevant, as trace contaminants in consumables can introduce systematic bias that no statistical rule will distinguish from a genuine analytical shift.

Common challenges and practical solutions in real-world research settings

Even with best practices in place, day-to-day research presents unique hurdles that require flexible, evidence-based responses rather than rigid adherence to ideal-case protocols.

One of the most frequent disruptions is a reagent lot change. When a new lot of a critical reagent is introduced, it is not sufficient to assume equivalence with the previous lot. Reagent lot changes require verification using a side-by-side comparison with the previous lot, and patient or split samples can serve as QC surrogates when commercial control materials are unavailable. This approach preserves QC continuity without requiring expensive dedicated materials for every lot transition.
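
A side-by-side lot comparison can be summarized as the percent difference between paired results. The sketch below assumes the same samples were measured once with each lot. The function name and the 10% default acceptance limit are placeholders for illustration, so substitute the limit defined in your own SOP.

```python
from statistics import mean


def lot_comparison(old_lot, new_lot, max_mean_pct_diff=10.0):
    """Compare paired results from the previous and new reagent lots.

    old_lot, new_lot: results for the same samples, in the same order.
    max_mean_pct_diff: acceptance limit in percent (an assumption of this sketch).
    """
    if not old_lot or len(old_lot) != len(new_lot):
        raise ValueError("both lots need the same non-zero number of results")
    pct_diffs = [(new - old) / old * 100.0 for old, new in zip(old_lot, new_lot)]
    mean_diff = mean(pct_diffs)
    return {
        "pct_diffs": pct_diffs,
        "mean_pct_diff": mean_diff,
        "acceptable": abs(mean_diff) <= max_mean_pct_diff,
    }


# Hypothetical split samples measured with both lots.
previous = [10.0, 20.0, 40.0]
incoming = [10.2, 19.8, 40.4]
result = lot_comparison(previous, incoming)
print(result["mean_pct_diff"], result["acceptable"])
```

Keeping the per-sample differences alongside the mean makes it easier to spot a lot that agrees on average but diverges at one end of the concentration range.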

When commercial QC materials are genuinely not available, acceptable alternatives include:

- Retained patient samples with previously characterized values, stored under validated conditions.

- Split samples divided from a well-characterized research specimen and run across multiple analytical sessions.

- Spiked samples prepared by adding a known quantity of analyte to a blank matrix.
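
For spiked samples, the usual check is percent recovery: how much of the added analyte the method actually reports. The sketch below assumes results and the spike amount are in the same units. The function names and the 80 to 120% acceptance window are illustrative assumptions rather than a prescribed limit.

```python
def spike_recovery(spiked_result, unspiked_result, amount_added):
    """Percent of the added analyte recovered by the method."""
    if amount_added <= 0:
        raise ValueError("amount_added must be positive")
    return (spiked_result - unspiked_result) / amount_added * 100.0


def recovery_acceptable(recovery_pct, low=80.0, high=120.0):
    """Check recovery against an acceptance window (window is an assumption of this sketch)."""
    return low <= recovery_pct <= high


# Hypothetical example: blank matrix reads 5.0, 10.0 units added, spiked sample reads 14.5.
recovery = spike_recovery(spiked_result=14.5, unspiked_result=5.0, amount_added=10.0)
print(recovery, recovery_acceptable(recovery))
```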

A more systemic risk arises from over-reliance on QC alone. QC detects errors after they occur; it does not prevent the conditions that generate them. Labs that skip QA entirely may pass individual QC checks while accumulating process vulnerabilities that eventually produce catastrophic failures. Integrated QA/QC combined with continual improvement is the only model that addresses both symptoms and root causes.

Signs that your QC process needs revision include:

- Frequent out-of-control events without an identifiable root cause.

- Inconsistent performance across different operators or shifts.

- Reagent or lot-specific failures that are not captured in change management records.

- QC results that are consistently near warning limits without triggering formal review.

- Absence of trend analysis in periodic management reviews.

Pro Tip: Schedule routine audits and management reviews at fixed intervals, such as quarterly, rather than only in response to failures. Creeping degradation in QC performance is rarely visible in individual run reports but becomes apparent when data is reviewed longitudinally.

Sourcing certified lab supplies and applying defined reagent standards for results reduces the frequency of lot-related QC disruptions by ensuring baseline material quality before analytical work begins.

The uncomfortable truth: Why most labs underestimate the power of integrated QA/QC

There is a persistent tendency in research settings to treat QC as sufficient on its own. Run the controls, check the charts, accept or reject the run. It feels rigorous because it is quantitative and visible. But QC treats symptoms, not root causes.

Only integrated systems that incorporate a Corrective and Preventive Action (CAPA) framework sustain quality over time. CAPA requires not just identifying that an error occurred, but systematically investigating why it occurred and modifying the underlying process to prevent recurrence. Without this loop, the same failures reappear in different forms.

The labs and independent researchers who achieve sustained reproducibility are not those with the most sophisticated instruments. They are the ones who design systems with built-in feedback, where QA prevents the conditions that QC would otherwise have to catch. Reviewing advanced QC strategies with this integrated perspective in mind is a practical starting point for building future-proof reliability rather than mere compliance.

Enhance your lab’s quality control with proven solutions

Applying the frameworks and techniques covered in this article is significantly more effective when the underlying materials meet verified purity and consistency standards. Variability introduced at the reagent or consumable level creates noise that no statistical tool can fully compensate for.

At Herbilabs, we supply research-grade reagents, sterile diluents, and bacteriostatic water manufactured under strict purity controls to support demanding QC environments. Whether you are implementing a new LQMS or refining an existing workflow, our resources on how to select laboratory reagents and the role of high-purity reagents in reproducible results provide practical guidance for your next steps. Our reagent handling best practices guide is also available to help you maintain material integrity from receipt through use.

Frequently asked questions

What is the difference between quality assurance and quality control in research?

Quality assurance is process-oriented and preventative, establishing the systems and conditions that support reliable outputs, while quality control is product-oriented and focuses on detecting and correcting errors in specific results or batches.

How can independent researchers perform effective quality control?

Start by standardizing processes with SOPs, then implement basic QC tools such as Levey-Jennings charts, and document all control results consistently to build a performance baseline that supports trend detection over time.

What are Levey-Jennings charts and how are they used in quality control?

Levey-Jennings charts are graphical tools that plot sequential control values against predefined mean and standard deviation limits, enabling researchers to detect systematic drift or random error patterns before they affect reported results.

How does ISO 15189 influence quality control in labs?

ISO 15189 establishes requirements for both internal QC and external quality assessment in laboratory settings, specifying that the frequency and scope of each activity should be calibrated to the stability and risk profile of the analytical method in use.

What should you do when quality control materials are not available?

When commercial controls are absent, patient or split samples with previously characterized values provide a viable alternative, ensuring that QC continuity is maintained without interrupting the analytical workflow.